The Software-Defined Data Center is no longer just a “marketing term”, as many would have you believe.

Rather, it is a complete framework, backed by standards, for building your next software-based data center.

So it is important to understand the Software-Defined Data Center (SDDC). But that’s not the only reason.

The other reason is the confusion out there about the difference between SDDC and cloud.

Yes!

Many do not know whether their applications run in an SDDC or in the cloud, or whether they are building a cloud or an SDDC.

And many still think of the “data center” as an enclosed, four-walled structure housing communication cabinets within our “access”.

However, the data center has evolved since then. Applications have moved beyond a single location to a cloud somewhere else, beyond our access. A Software-Defined Data Center is NOT just on-premises; it can also reach public cloud services or work as part of a hybrid cloud.

So I decided to cover all these topics comprehensively in a “Software-Defined Data Center tutorial” for you.


I know many of you will be especially interested in the last item in the table of contents. I am intentionally keeping it at the end, because you can appreciate the differences only once you know exactly what an SDDC is. So if you already know what an SDDC is, you can jump straight to the end; otherwise, follow the sequence of this blog.

What is a Software-Defined Data Center?

SDDC started as a marketing term coined by one of the vendors back in 2012. It has taken off considerably since then, with the standards body DMTF getting involved to define the relevant standards for an open Software-Defined Data Center.

According to the DMTF, an open Software-Defined Data Center is defined as follows:

“A programmatic abstraction of logical compute, network, storage, and other resources, represented as software. These resources are dynamically discovered, provisioned, and configured based on workload requirements. Thus, the SDDC enables policy-driven orchestration of workloads, as well as measurement and management of resources consumed”

There are other definitions of SDDC as well.

A very concise one comes from SearchConvergedInfrastructure.com:

“A data storage facility in which all infrastructure elements—networking, storage, CPU, and security—are virtualized and delivered as a service. Deployment, operation, provisioning, and configuration are abstracted from hardware”

Before we dig deeper into SDDC, it makes sense to know the difference between a traditional data center and an SDDC.

Difference between Traditional Data Center and Software-Defined Data Center

Traditional data centers use physical infrastructure such as physical servers, switches, and storage systems. Scaling is tied to each individual hardware element on site (servers, switches, firewalls, storage systems, etc.). A Software-Defined Data Center uses “virtualization” to abstract all these on-site hardware resources, providing highly scalable, efficient, and portable virtual compute, storage, networking, and security.

To understand SDDC, understanding virtualization and the hypervisor is a MUST

With virtualization, we create a software version of something physical, such as compute, storage, or networking resources.

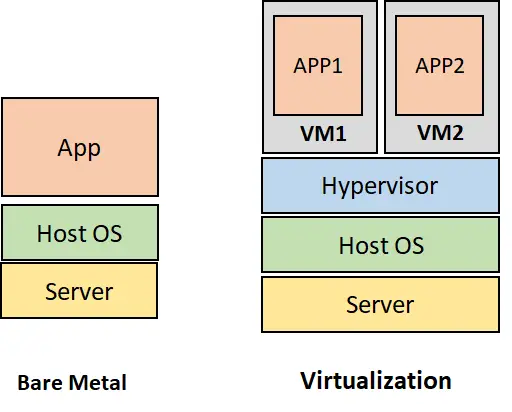

What makes virtualization feasible is the “hypervisor”.

The hypervisor is a piece of software that runs on top of a physical server. It divides the resources of the physical server and allocates them to the virtual environment. With the hypervisor, we can turn one physical server into multiple virtual machines (VMs), each with its own CPUs, memory, and operating system.

Once we have the VMs, instead of running one application on the physical server, we can run multiple applications on the same server, resulting in better efficiency and cost savings. This is the essence of server virtualization.
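To make the hypervisor’s role concrete, here is a minimal Python sketch of a host handing out CPU and memory to VMs. The `Host` and `VM` classes and the 16-CPU / 64 GB figures are made up for illustration, and the model deliberately ignores overcommit, which many real hypervisors allow.

```python
from dataclasses import dataclass, field


@dataclass
class VM:
    name: str
    vcpus: int
    memory_gb: int


@dataclass
class Host:
    """A physical server whose CPU and memory are handed out to VMs."""
    cpus: int
    memory_gb: int
    vms: list = field(default_factory=list)

    def free_cpus(self) -> int:
        return self.cpus - sum(vm.vcpus for vm in self.vms)

    def free_memory_gb(self) -> int:
        return self.memory_gb - sum(vm.memory_gb for vm in self.vms)

    def create_vm(self, name: str, vcpus: int, memory_gb: int) -> VM:
        # Refuse the request if the host cannot back it with real resources
        # (no overcommit in this simplified model).
        if vcpus > self.free_cpus() or memory_gb > self.free_memory_gb():
            raise ValueError(f"not enough free capacity for {name}")
        vm = VM(name, vcpus, memory_gb)
        self.vms.append(vm)
        return vm


# Hypothetical host: 16 CPUs and 64 GB of memory shared by two VMs.
host = Host(cpus=16, memory_gb=64)
host.create_vm("web", vcpus=4, memory_gb=8)
host.create_vm("db", vcpus=8, memory_gb=32)
print(host.free_cpus(), host.free_memory_gb())  # prints: 4 24
```

Two applications now share one physical server, and the remaining 4 CPUs and 24 GB are still available for a third VM.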

Components of a Software-Defined Data Center

Software-Defined Compute (Compute Virtualization)

This is the first step towards the SDDC and was explained under the hypervisor above; it is also called server/compute virtualization or physical hardware virtualization. It lets you run virtual servers on top of a physical server. In simple terms, the CPU and memory of the physical server are allocated to the virtual servers.

Software-Defined Network (Network Virtualization)

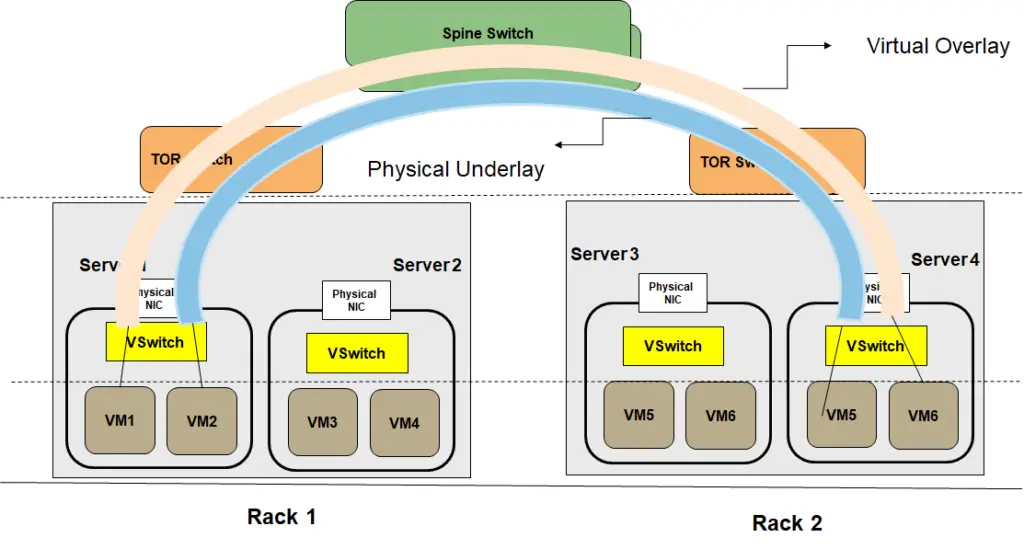

Software-defined networking enables network abstraction and lets you provision and run networks independently of the networking hardware. One of the challenges with the growth in virtual machines is that conventional networks do not make it easy to migrate VMs from one data center to another. The IP addresses of VMs are tightly coupled to the physical network, which makes migration very complex. To solve this, network virtualization enables virtual overlays that run on top of the physical network (the underlay). The overlay hides the VMs’ IP addresses from the physical underlay network, making VM migration a breeze. In addition, network virtualization brings flexibility and opens the door to innovation, as new services can be launched without depending on upgrades to the networking hardware.
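The overlay idea can be sketched in a few lines of Python. This is a toy model, not any vendor’s implementation: the `Overlay` class, its method names, and all IP addresses are assumptions made up for illustration. It shows that the underlay sees only host addresses, so a migration changes a mapping rather than the VM’s own IP.

```python
class Overlay:
    """Maps each VM's overlay IP to the underlay host that currently runs it."""

    def __init__(self):
        self.location = {}  # overlay (VM) IP -> underlay (host) IP

    def attach(self, vm_ip: str, host_ip: str) -> None:
        self.location[vm_ip] = host_ip

    def migrate(self, vm_ip: str, new_host_ip: str) -> None:
        # Only the mapping changes; the VM keeps its overlay IP.
        if vm_ip not in self.location:
            raise KeyError(vm_ip)
        self.location[vm_ip] = new_host_ip

    def encapsulate(self, src_vm: str, dst_vm: str, payload: bytes) -> dict:
        # The underlay routes on the outer header, which holds host addresses only.
        return {
            "outer_src": self.location[src_vm],
            "outer_dst": self.location[dst_vm],
            "inner": {"src": src_vm, "dst": dst_vm, "payload": payload},
        }


overlay = Overlay()
overlay.attach("10.0.0.5", "192.168.1.10")  # VM A on a host in the first DC
overlay.attach("10.0.0.6", "192.168.1.11")  # VM B on another host there

before = overlay.encapsulate("10.0.0.5", "10.0.0.6", b"hello")
overlay.migrate("10.0.0.6", "172.16.9.20")  # VM B moves to a host in a second DC
after = overlay.encapsulate("10.0.0.5", "10.0.0.6", b"hello")

print(before["outer_dst"], "->", after["outer_dst"])
print(before["inner"] == after["inner"])  # the VM-level packet is unchanged
```

After the migration the outer destination points at the new host, while the inner packet, addressed to the VM’s own IP, is identical.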

Software-Defined Storage (Storage Virtualization)

Software-defined storage (SDS) separates storage software from its hardware. SDS runs on industry-standard x86 servers rather than on traditional NAS or SAN systems. Decoupling storage software from hardware enables a lot of flexibility: storage capacity can be expanded easily as the need arises.

Storage has come of age. Traditional monolithic storage is sold as a bundle of specialized hardware and proprietary software. With SDS, there is no need for specific hardware. SDS also adds a software layer between the physical storage and the data request, which enables the use of APIs to manage and maintain the storage devices. The storage can be scaled out easily, while automation can bring costs down.
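As a rough illustration of managing storage through an API and scaling it out, consider the following Python sketch. The `StoragePool` class and its methods are hypothetical, and the model tracks capacity only; it ignores replication, data placement, and performance, which real SDS products have to handle.

```python
class StoragePool:
    """Pools the capacity of commodity nodes and carves volumes out of it."""

    def __init__(self):
        self.nodes = {}    # node name -> raw capacity in GB
        self.volumes = {}  # volume name -> size in GB

    def add_node(self, name: str, capacity_gb: int) -> None:
        # Scaling out is just registering another server with the pool.
        self.nodes[name] = capacity_gb

    def capacity_gb(self) -> int:
        return sum(self.nodes.values())

    def free_gb(self) -> int:
        return self.capacity_gb() - sum(self.volumes.values())

    def create_volume(self, name: str, size_gb: int) -> None:
        if size_gb > self.free_gb():
            raise ValueError(f"pool has only {self.free_gb()} GB free")
        self.volumes[name] = size_gb


pool = StoragePool()
pool.add_node("node-1", 1000)
pool.add_node("node-2", 1000)
pool.create_volume("vm-images", 1500)    # larger than any single node

try:
    pool.create_volume("backups", 1000)  # only 500 GB left, so this fails
except ValueError as err:
    print("rejected:", err)

pool.add_node("node-3", 1000)            # scale out by adding a server
pool.create_volume("backups", 1000)      # now it fits
print(pool.free_gb())                    # prints: 500
```

The caller never addresses an individual disk or array; it asks the software layer for capacity, and growing the pool is a single call.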

Automation and Orchestration Layer

Simply virtualizing functions is not enough. With so many moving pieces in an SDDC, a robust automation and orchestration layer is mandatory. Automation refers to automating a single task, such as spinning up a VM, while orchestration refers to automating a collection of tasks in a certain sequence, such as spinning up a VM, assigning an IP address, then creating a virtual network. A central orchestration and automation layer can be used to efficiently allocate resources, configure them, update them, monitor operations, and take autonomous actions based on closed-loop control.
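The distinction between automation and orchestration can be sketched as follows. Each function below stands for one automated task, and `orchestrate` runs them in a fixed sequence. All names, the in-memory `state` dictionary, and the address scheme are assumptions for illustration; a real orchestrator would call infrastructure APIs and handle failures and rollback.

```python
def create_vm(state: dict, name: str) -> None:
    """One automated task: spin up a VM."""
    state["vms"].append(name)


def assign_ip(state: dict, name: str) -> None:
    """One automated task: give the VM an address from a simple counter."""
    state["ips"][name] = f"10.0.0.{len(state['ips']) + 10}"


def create_network(state: dict, name: str) -> None:
    """One automated task: create a virtual network for the VM."""
    state["networks"].append(f"net-{name}")


def orchestrate(state: dict, name: str, tasks) -> list:
    """Orchestration: run the automated tasks in order and log each step."""
    log = []
    for task in tasks:
        task(state, name)
        log.append(task.__name__)
    return log


state = {"vms": [], "ips": {}, "networks": []}
log = orchestrate(state, "app-1", [create_vm, assign_ip, create_network])
print(log)    # ['create_vm', 'assign_ip', 'create_network']
print(state)
```

Each task is useful on its own, but the ordered list passed to `orchestrate` is what turns three separate automations into one repeatable workflow.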

SDDC Architecture:

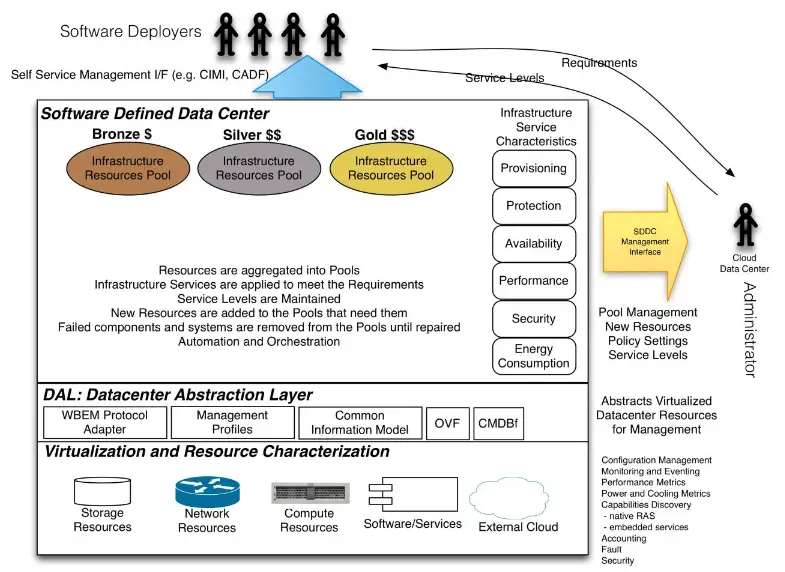

The SDDC architecture as provided by the DMTF is shown in the figure above.

A few points about the architecture:

1. At the lowest layer are the resources. The resources shown are storage, network, and compute. There can be other software and services (for example, security components such as firewalls, IPS, and IDS to provide security as a service) in addition to the external cloud, which can be a public cloud.

2. One of the most important layers is the “DAL”, i.e., the Datacenter Abstraction Layer. The DAL abstracts the resources for the users at the upper layers. This abstraction is done in a standard way, providing standard APIs. For example, the DMTF has defined common information models, CMDBf, and the OVF format.

3. The resources are managed through SDDC management and automation software that has an end-to-end view of the resources. The management interface is defined in CIMI (Cloud Infrastructure Management Interface).
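The role of an abstraction layer between resources and their users can be sketched in Python as below. This is not the DMTF’s API or data model; the `Resource`, `ComputeNode`, `StorageArray`, and `AbstractionLayer` names are invented here purely to show the idea of describing different resource types through one uniform interface.

```python
from abc import ABC, abstractmethod


class Resource(ABC):
    """Anything the abstraction layer can expose: compute, storage, network."""

    @abstractmethod
    def describe(self) -> dict:
        ...


class ComputeNode(Resource):
    def __init__(self, name: str, cpus: int):
        self.name, self.cpus = name, cpus

    def describe(self) -> dict:
        return {"kind": "compute", "name": self.name, "cpus": self.cpus}


class StorageArray(Resource):
    def __init__(self, name: str, capacity_gb: int):
        self.name, self.capacity_gb = name, capacity_gb

    def describe(self) -> dict:
        return {"kind": "storage", "name": self.name,
                "capacity_gb": self.capacity_gb}


class AbstractionLayer:
    """Hides resource-specific details behind one uniform inventory call."""

    def __init__(self):
        self._resources = []

    def register(self, resource: Resource) -> None:
        self._resources.append(resource)

    def inventory(self, kind=None) -> list:
        items = [r.describe() for r in self._resources]
        return [i for i in items if kind is None or i["kind"] == kind]


dal = AbstractionLayer()
dal.register(ComputeNode("host-1", cpus=16))
dal.register(StorageArray("array-1", capacity_gb=2000))
print(dal.inventory("compute"))
```

Management software at the upper layer only needs to understand the common description format, not the details of each underlying device.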

Benefits of SDDC

Costs

Resource pooling helps save costs. Buying individual servers and networking hardware for each need tends to over-dimension the hardware; with virtualization, the same hardware can instead be partitioned and shared. Multiple VMs, for example, can be hosted on a single server instead of deploying a new server for each new application.
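A back-of-the-envelope calculation shows the effect of pooling. All numbers below are hypothetical, and the estimate considers CPU only; memory, headroom, and redundancy would raise the pooled figure in practice.

```python
import math

apps = 10             # hypothetical number of applications
vcpus_per_app = 4     # hypothetical CPU need of each application
cpus_per_server = 16  # hypothetical size of one physical server

# Traditional approach: one physical server per application.
dedicated_servers = apps

# Pooled approach: pack the applications as VMs onto shared servers.
pooled_servers = math.ceil(apps * vcpus_per_app / cpus_per_server)

print(dedicated_servers, pooled_servers)  # prints: 10 3
```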

Scalability and Elasticity

The infrastructure can be scaled seamlessly as and when desired. Elastic resources that scale up and scale out on demand let capacity follow the workload.

Agility & Automation

The time to provision services is decreased. It no longer takes days or months to provision a server, deploy an application, and configure networking. Everything is software-based and can be done almost instantaneously. Virtualization combined with automation/orchestration is a real time-saver and opens the door to innovation. The automation layer can be used to efficiently allocate resources, configure them, update them, monitor operations, and take autonomous actions based on closed-loop control.

APIs & Programmability

Simplified data center management is another benefit. Resources can be programmed through common information models, which makes it possible to manage services from a single dashboard and offer them to internal or external parties.

Software-defined Data Center vs Cloud

In order to understand this difference, it is important to refresh the definition of cloud.

What is Cloud?

Let’s take the definition of cloud according to NIST:

“cloud computing is a model for enabling ubiquitous, convenient, on-demand network access to a shared pool of configurable computing resources (e.g., networks, servers, storage, applications, and services) that can be rapidly provisioned and released with minimal management effort or service provider interaction.”

The NIST definition lists five essential characteristics of cloud computing:

- on-demand self-service,

- broad network access,

- resource pooling,

- rapid elasticity or expansion,

- measured service.

It also lists three “service models” (software, platform, and infrastructure) and four “deployment models” (private, community, public, and hybrid cloud) that together categorize ways to deliver cloud services.

Difference with SDDC

Comparing the earlier definitions and architecture of SDDC with those of cloud, it is clear that SDDC focuses more on the architecture and on defining standard interfaces (for example, the Datacenter Abstraction Layer), while cloud is more focused on services and capabilities. However, if you read the definition of cloud carefully, there is nothing in it that an SDDC cannot provide.

Therefore we can say that a “Software-defined data center (SDDC) provides the components and architecture to build a cloud”. Further, Open SDDC is one way to build the cloud, but there could be other ways, for example a vendor-specific architecture.

To make it simple: we use “SDDC” to build “cloud”.

It’s your turn: what is your understanding of SDDC and cloud? Do you agree with this explanation?

Very concise explanation, and it makes perfect sense. If I were to differentiate between the two, I would focus on the part of the cloud definition that says “on-demand network access to a shared pool”. While we use SDDC to build cloud, how far our SDDC sits on the scale of “cloudification” would be determined by the extent and flexibility of network access. That’s how I would differentiate an SDDC (private/community, custom-made to support specific apps) from hyperscalers such as AWS, Azure, and Google Cloud.

Very interesting perspective Zahid !, thank you for sharing your thoughts

SDDC is misspelled as SDCC in the beginning of the article.

Thank you so much Muthu, corrected now

Interesting read Faisal but I believe the confusion will still remain for people who don’t have a system hands-on over the cloud. I would have put my understanding as follows –

Cloud can be broadly classified into 2 categories – Public & Private

Both of them are built using the SDDC approach.

This SDDC building approach is visible to the customer in the case of a private cloud but not transparent in public clouds where everything is just a service.

Additionally – all Telco clouds (as of now) fall under the private cloud branch and are built using the same SDDC approach.

Interesting comment @Asad khan , thanks for sharing your thoughts…. This is a good perspective. Agreed !

@faisal – Although on a small scale, I have started a short video series on cloud and related technologies on LinkedIn. One of the video links is here. Kindly go through it whenever you have time; I will be really happy to have your feedback.

https://www.linkedin.com/posts/asad-khan-0585a812_creativesummaries-openstack-kubernetes-activity-6821673534981570560-pCpM